Part 1: What Does It Mean to Trust AI?

How trust operates in complex socio-technical systems

Post 1 of 5 — Trust in AI Series

Trust is a deceptively simple word. We use it easily in human relationships, yet the moment we apply it to AI, the concept becomes layered, psychological, and structurally complex. It is no longer just an interpersonal intuition — it becomes a cognitive strategy for acting under uncertainty, a design outcome, and a governance challenge.

To understand what it means to trust an AI system, we need to start with its psychological foundations. At its core, trust is a willingness to be vulnerable. It is a psychological state in which a person chooses to accept uncertainty because they believe the other party, whether human or machine, will act in ways that align with their goals. For the person deciding whether to rely on a system, that belief matters more than how the algorithm works internally.

In daily life, trust allows us to move through a world of imperfect information. In socio-technical systems, it is the mechanism that lets humans delegate tasks to machines that operate through internal processes which are opaque, probabilistic, and constantly evolving. Without trust, AI adoption stalls. With misplaced trust, it becomes dangerous. Understanding the psychology underneath is not optional — it is the foundation of responsible deployment.

1.1 Trust vs. Reliance: A Critical Distinction

One of the most persistent misunderstandings in AI adoption is the assumption that usage equals trust. In reality, people can rely on technology without trusting it, and conflating the two creates significant blind spots.

Reliance is a behaviour. You follow an AI’s suggestion because you must — due to limited alternatives, workload pressure, or institutional expectation. Trust is an attitude. You believe the AI will help you achieve your goals, and you accept vulnerability because you expect alignment between the system’s outputs and your interests.

A clinician who uses an AI diagnostic tool because policy requires it is relying on the technology, not necessarily trusting it. A commuter who accepts a navigation route because it usually gets them there fastest is demonstrating trust. When we conflate these two things, we risk misreading compliance as confidence and adoption as endorsement. The danger is real: compliance without trust is fragile. The moment something goes wrong, the behaviour collapses. Trust, by contrast, is resilient — it supports long-term integration, but only when it is justified.

1.2 Trust vs. Trustworthiness

A second distinction is equally important. Trustworthiness is an attribute of the system itself — its objective properties of competence, safety, fairness, robustness, and privacy. Trust is the user’s subjective perception — their confidence, expectations, and willingness to rely. These two do not automatically align.

A trustworthy system can fail to earn trust if users lack understanding, fear automation, or distrust the organisation deploying it. And an untrustworthy system — one that is biased or poorly tested — can gain high trust if it appears confident, human-like, or widely endorsed. This asymmetry is exactly why trust must be designed and governed, not merely hoped for.

1.3 Do We Trust AI Like We Trust Humans?

Traditional theories of interpersonal trust emphasise three dimensions: ability (competence), integrity (honesty and consistency), and benevolence (good intentions). AI has no motives, yet people ascribe intentions based on design cues, communication style, and institutional context. A conversational AI that uses warm, empathic language can be perceived as caring. A system that emphasises privacy can generate a sense of benevolence. A model that gives consistent outputs feels honest.

This is not irrational. Humans are wired to interpret agency even when agency is not real. The cognitive tendency to anthropomorphise is one of the most powerful drivers of AI trust formation, and one of the riskiest, because an illusion of warmth can mask competence failures. Researchers increasingly treat trust in AI and trust in humans as distinct psychological constructs. The two overlap enough that the boundary is easy to miss, which is why it matters: with AI, most assumptions about capability and intention are inferred from cues rather than observed.

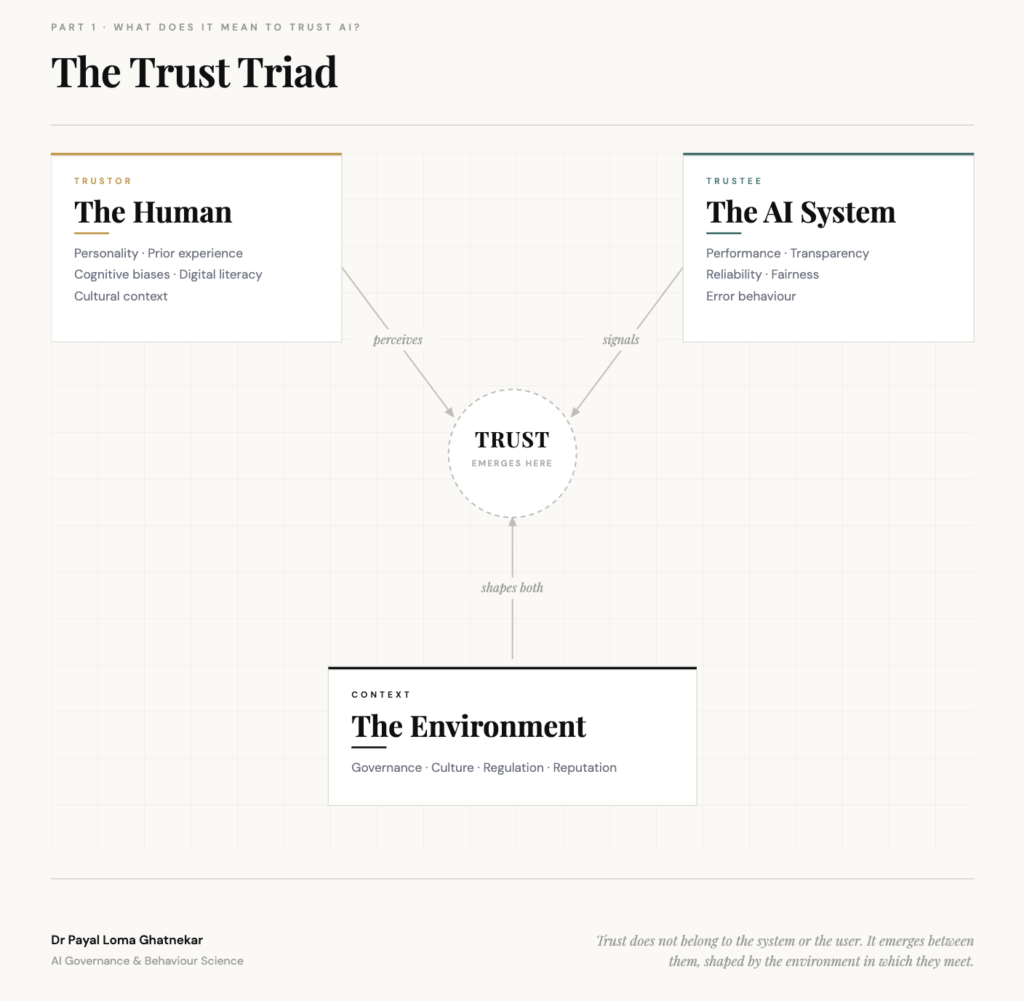

1.4 The Triad: Trustor, Trustee, and Context

A robust framework for understanding AI trust requires three elements working together. The trustor — the human — brings personality traits, prior experiences, cognitive biases, digital literacy, and cultural context. Trust is never purely about the machine; it is always filtered through the human mind.

The trustee is the AI system. Intentionally or not, it communicates its trustworthiness through performance, transparency, reliability, fairness, and how it behaves when it errs.

The interactive context is the environment: organisational culture, governance structures, policy frameworks, and institutional reputation. People trust AI less when they distrust the organisation behind it, a pattern consistently demonstrated across healthcare, banking, and public-sector deployments.

Trust does not belong to the system or the user. It emerges between them, shaped by the environment in which they meet.

1.5 Why Calibrated Trust — Not Simply ‘Building Trust’ — Is the Real Goal

AI adoption is no longer limited by technological capability. It is limited by cognitive fears, lack of clarity, perceived loss of control, institutional mistrust, uncertainty about accountability, and discomfort with black-box reasoning. When trust is low, even high-performing systems are rejected. When trust is high but misplaced, systems are misused.

This is why building trust is not the goal. The real goal is calibrated trust — trust that is proportional, evidence-based, and matched to real capability. Calibrated trust makes people feel safer relying on the system, allows errors to become learning opportunities rather than disasters, enables institutions to demonstrate accountability, and supports users in maintaining agency rather than feeling overruled. Rapid adoption requires psychological confidence. Responsible adoption requires technical integrity. Calibrated trust requires both.
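One way to make "matched to real capability" concrete is to compare how much people say they trust a system with how often it is actually right. The Python sketch below is only an illustration under stated assumptions: the `Interaction` record, the self-reported `stated_trust` score between 0 and 1, and the 0.1 tolerance are all hypothetical choices, not measures proposed in this series. Note that it uses stated trust (an attitude), not usage (a behaviour), in keeping with the trust versus reliance distinction in 1.1.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged decision (hypothetical schema, for illustration only)."""
    ai_was_correct: bool   # did the AI's suggestion turn out to be right?
    stated_trust: float    # user's self-reported trust in that suggestion, 0.0 to 1.0


def calibration_gap(log: list[Interaction]) -> float:
    """Mean stated trust minus observed accuracy.

    Positive: people trust the system more than its track record supports.
    Negative: people trust it less than its track record supports.
    """
    if not log:
        raise ValueError("log must contain at least one interaction")
    if any(not 0.0 <= i.stated_trust <= 1.0 for i in log):
        raise ValueError("stated_trust must lie between 0.0 and 1.0")
    accuracy = sum(i.ai_was_correct for i in log) / len(log)
    mean_trust = sum(i.stated_trust for i in log) / len(log)
    return mean_trust - accuracy


def classify(gap: float, tolerance: float = 0.1) -> str:
    """Label the gap. The 0.1 tolerance is an arbitrary illustrative threshold."""
    if gap > tolerance:
        return "over-trust"
    if gap < -tolerance:
        return "under-trust"
    return "roughly calibrated"
```

For example, a log of ten interactions in which the AI was right six times and users reported 0.9 trust each time gives a gap of roughly 0.3, which `classify` labels "over-trust". This is the untrustworthy-but-trusted case from 1.2. A gap near zero says nothing about whether the system is good, only that trust and capability are in step.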

Research evidence

Trust in AI and trust in humans are psychologically dissociable constructs: they do not strongly correlate. This means AI governance cannot simply borrow the interpersonal trust models developed to understand human relationships. A distinct framework is needed.

Source: Montag et al. (2023). Trust toward humans and trust toward artificial intelligence are not associated. PMC.

Trust in AI is not a single variable to optimise. It is the outcome of complex interactions between human psychology, machine characteristics, and institutional context. Only by understanding these three layers — trustor, trustee, and context — can we design systems that people will adopt quickly and rely on responsibly.
