Part 4: What Institutions Must Do

Oversight, governance, and the systemic conditions for justified trust

Post 4 of 5 — Trust in AI Series

Trust that emerges from warm interfaces or persuasive design alone is fragile. It collapses the moment something goes wrong. Sustainable trust — the kind required for healthcare, public services, or enterprise AI adoption — depends on context: the governance structures, oversight mechanisms, organisational culture, and regulatory expectations surrounding the technology.

No AI system exists in isolation. A model is always embedded within an organisation, a workflow, a set of responsibilities, and a legal and ethical framework. Trust in AI is therefore not only a question of ‘do I trust the system?’ but of ‘do I trust the institution deploying it? Do I trust that it is monitored? Do I trust that someone is accountable if something goes wrong?’ Institutions shape the conditions under which trust can be justified — and without those conditions, even technically excellent AI cannot earn durable confidence.

4.1 The Three Pillars of Trustworthy AI

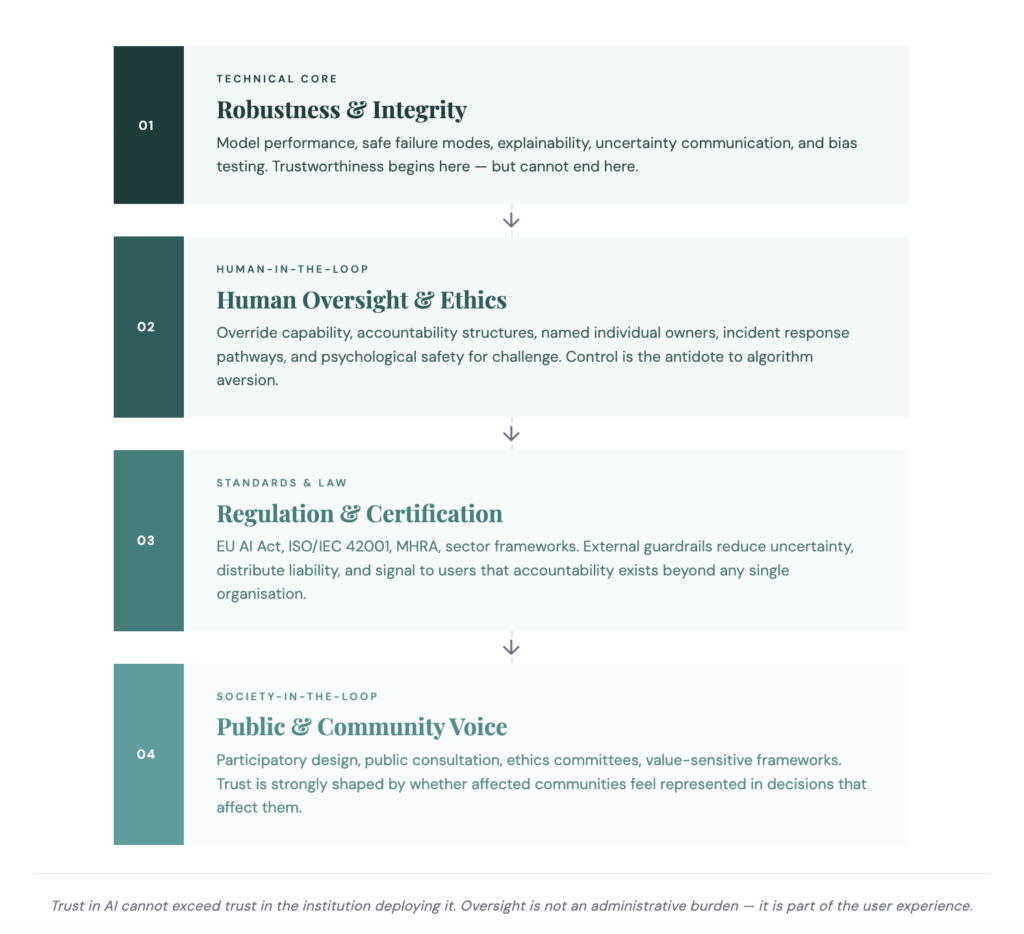

Many regulators and standards bodies have converged on a common foundation, stated most explicitly in the EU High-Level Expert Group’s 2019 Ethics Guidelines for Trustworthy AI: AI systems must be lawful, ethically sound, and technically robust. Lawfulness requires compliance with data protection frameworks, patient rights, discrimination protections, and sector-specific regulation. Ethical soundness requires upholding fairness, respect for autonomy, transparency, and non-maleficence. Technical robustness requires reliable behaviour across conditions, resistance to manipulation, and safe failure modes.

These pillars are only meaningful when institutions operationalise them — not as documentation exercises but as lived practice embedded in how decisions are made, how incidents are handled, and how accountability is assigned.

4.2 Accountability and Auditability

Trust collapses when people are unclear about who is responsible when something goes wrong. Users need to know who built the system, who validated it, who monitors it, who intervenes when it fails, and who is liable when harm occurs. Accountability is as much a psychological factor as a procedural one: when organisations demonstrate clear ownership of AI decisions, users feel protected even in the presence of error.

Auditability is the structural mechanism that makes accountability visible. It requires documentation of model development, traceable decision flows, version control, monitoring for performance drift, explainability mechanisms, and escalation pathways. Critically, auditability must exist before deployment — to ensure systems are safe — and throughout deployment — to ensure systems remain safe. Without robust auditing, even well-performing systems struggle to earn trust, because the chain of accountability is invisible to users.
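As a rough illustration of what traceable decision flows, version control, and drift monitoring with an escalation pathway could look like in practice, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `AuditRecord` fields, the `check_drift` function, and the 0.05 tolerance are hypothetical and not drawn from any particular standard or product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AuditRecord:
    """One traceable entry in a decision log (field names are illustrative)."""
    model_version: str               # which version of the model produced the output
    input_id: str                    # reference to the case, not the raw data
    output: str                      # what the system recommended
    reviewer: Optional[str] = None   # who confirmed or overrode it, if anyone
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def check_drift(baseline_accuracy: float,
                recent_outcomes: List[bool],
                tolerance: float = 0.05) -> str:
    """Return "ok" or "escalate" by comparing recent accuracy to the baseline.

    The tolerance is a hypothetical threshold; a real deployment would
    agree it with the people accountable for the system.
    """
    if not recent_outcomes:
        # No evidence is treated as a reason to look, not as proof of safety.
        return "escalate"
    recent_accuracy = sum(recent_outcomes) / len(recent_outcomes)
    if recent_accuracy < baseline_accuracy - tolerance:
        return "escalate"
    return "ok"


record = AuditRecord(model_version="v1.2", input_id="case-001", output="approve")
status = check_drift(0.90, [True] * 8 + [False] * 2)  # recent accuracy is 0.80
```

The point of the sketch is less the arithmetic than the design choice: every decision carries its model version and reviewer, and a drop in performance routes to a named escalation path rather than passing silently.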

4.3 Human-in-the-Loop and Society-in-the-Loop

Human-in-the-loop (HITL) is frequently misunderstood as a bureaucratic checkbox. In practice, it is a psychological safety mechanism. The ability to intervene, override, or correct a system is one of the strongest predictors of user trust. HITL ensures that decisions are reviewable, users retain control, and responsibility is genuinely shared. In high-stakes environments — healthcare, justice, finance, social care — this is not optional.

Society-in-the-loop (SITL) extends oversight beyond individual users and domain experts to include communities, public stakeholders, and affected groups. It shifts the question from ‘is the system safe?’ to ‘is the system aligned with societal expectations?’ Participatory design, public consultation, ethics committees, and value-sensitive design frameworks are all expressions of SITL in practice. Trust is strongly influenced by whether people feel represented in decisions that affect them.

4.4 The Multi-Stakeholder Trust Chain

AI governance involves an interconnected network of actors: developers, data scientists, product managers, clinicians, domain specialists, leaders, regulators, auditors, end users, and decision subjects (the people affected by AI decisions). Each brings different priorities. Developers focus on performance. Regulators focus on safety. Leaders focus on cost and efficiency. End users focus on reliability and usability. Decision subjects focus on fairness and agency.

When one stakeholder’s needs dominate or responsibilities are unclear, the chain fractures and trust erodes. Governance must coordinate across the entire chain — not simply regulate isolated parts.

4.5 Organisational Culture as a Hidden Determinant

Even the best technical safeguards fail when deployed within an organisation characterised by unclear communication, opaque decision-making, inconsistent policy, fear-driven leadership, or a history of digital transformation failures. AI inherits the culture around it. A culture that avoids accountability will deploy AI that avoids accountability. A culture that values integrity will deploy AI that signals integrity. Trust in technology cannot exceed trust in leadership.

4.6 Regulation as a Trust Signal

Internal governance is essential but not sufficient. People expect external guardrails: independent verification, global standards, certification, and proof of enforcement. Regulation serves several psychological functions simultaneously: it reduces uncertainty, provides assurance, distributes liability, and establishes common expectations. The EU AI Act, for example, does more than classify AI by risk level. For high-risk systems it attaches obligations such as technical documentation, record-keeping, human oversight, and post-market monitoring, so that trust can be justified rather than merely assumed.

Research evidence

Decision subjects value agency, contestability, and inclusion far more than developers typically expect. This finding provides strong empirical support for HITL, SITL, and multi-stakeholder governance. The gap between what developers believe users need and what users actually value is itself a governance risk.

Source: Alizadeh et al. (2024). Trust in AI-assisted Decision Making: Perspectives from Those Behind the System and Those for Whom the Decision is Made. ResearchGate.

Trust in AI cannot exceed trust in the institution deploying it. Accountability, oversight, and governance are not administrative burdens; they are part of the user experience. Without them, trust becomes fragile, reputation becomes the risk surface, and adoption remains brittle regardless of technical performance.

Continue the series

Prefer to read the whole series in one go? Download the full white paper — formatted for sharing with teams and policy contacts.

Download the white paper →