Part 5: Building Calibrated Trust: What Real-World AI Deployments Must Do

From fragmented trust to a coherent socio-technical strategy

Post 5 of 5 — Trust in AI Series

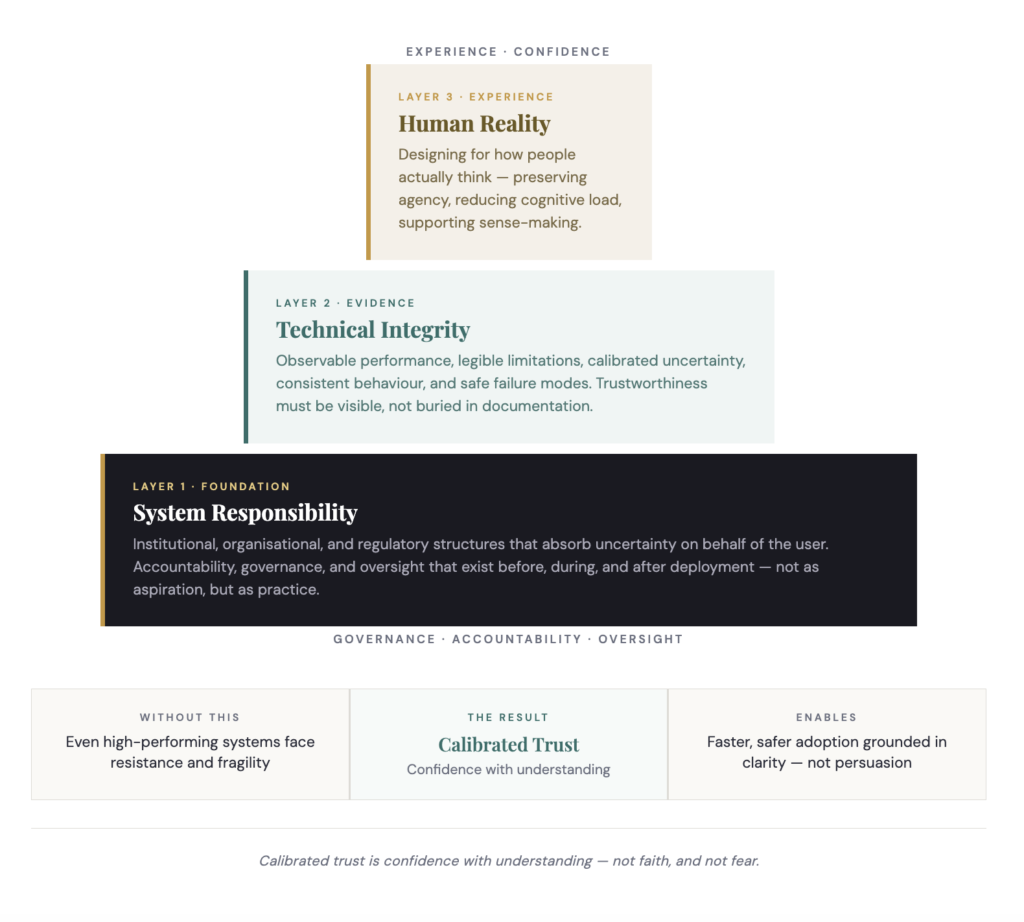

Across the previous four parts, a pattern has emerged. Trust in AI is shaped by human psychology, mediated through design, and sustained or undermined by institutions. When any one of these layers is neglected, trust becomes unstable. When all three align, trust becomes calibrated — and calibrated trust is the condition under which AI can be adopted quickly without compromising safety, dignity, or human agency.

Calibrated trust is not static. It evolves through experience, feedback, error handling, and institutional behaviour. It reflects an accurate mental model of what the system can do, what it cannot do, and how it behaves under uncertainty. Building it requires intentional design across the entire AI lifecycle — not a one-time deployment decision.

5.1 Why Calibration Is the Goal, Not Trust Itself

Much of the public discourse around AI focuses on building trust. This framing is misleading. Trust on its own is not inherently positive. High trust in an unreliable system is dangerous. Low trust in a highly capable system is wasteful. The real objective is alignment: between system capability and user expectations, between design signals and actual performance, between institutional assurances and lived experience.

When trust is misaligned, we see the two failure modes established in Part 2 — over-trust leading to automation bias and misuse, under-trust leading to rejection and workarounds. Calibrated trust avoids both by anchoring confidence in evidence, transparency, and agency rather than persuasion or fear.
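One rough way to make these two failure modes concrete is to compare how often users accept a system's output with how often that output is actually correct. The sketch below is illustrative only: the `Interaction` record, the `trust_gap` and `classify_trust` functions, and the 0.10 tolerance are all assumptions made for this example, not measures taken from the research cited in this series.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One logged decision (hypothetical record format)."""
    accepted: bool  # did the user accept the AI output?
    correct: bool   # was the AI output actually correct?


def trust_gap(log: list[Interaction]) -> float:
    """Reliance rate minus accuracy over the logged interactions.

    A positive gap suggests over-trust, a negative gap under-trust.
    """
    if not log:
        raise ValueError("log must contain at least one interaction")
    reliance = sum(i.accepted for i in log) / len(log)
    accuracy = sum(i.correct for i in log) / len(log)
    return reliance - accuracy


def classify_trust(gap: float, tolerance: float = 0.10) -> str:
    """Label the gap; the tolerance is an assumed, context-dependent threshold."""
    if gap > tolerance:
        return "over-trust"
    if gap < -tolerance:
        return "under-trust"
    return "roughly calibrated"


# Example: users accept every output, but only 6 in 10 are correct.
log = [Interaction(accepted=True, correct=(n < 6)) for n in range(10)]
label = classify_trust(trust_gap(log))
```

An aggregate gap like this is a coarse signal. It cannot show whether users accept the right outputs and reject the wrong ones, so in practice it would sit alongside case-level review rather than replace it.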

5.2 The Three Interdependent Layers

Technical Integrity: Making Trustworthiness Observable

Technical integrity refers to the actual properties of the AI system and how clearly those properties are communicated. This includes robustness across contexts, predictable behaviour, transparent limitations, explainability that supports decision-making, appropriate handling of uncertainty, and safe failure modes. A key insight from trust psychology is that users do not need perfect systems — they need legible ones.

Critically, integrity must be observable. Performance metrics locked in technical documentation do little to shape trust. What matters is how competence is experienced in interaction — does the system behave consistently over time? Are errors explained? Are limitations explicit rather than hidden? Technical integrity creates the conditions for justified trust, but it does not guarantee that users will respond accordingly.

Human Reality: Designing for How People Actually Think

Humans do not evaluate AI systems as rational auditors. They evaluate them as cognitive, emotional, and socially embedded beings. Many deployments fail not because the system is flawed but because it was designed for an idealised user who does not exist in practice.

Designing for human reality means preserving agency through overrides and genuine choice, reducing cognitive load rather than increasing it, supporting sense-making rather than simply providing explanation, and allowing users to develop an accurate mental model over time. Education plays a role, but it is not sufficient. Users do not want to become AI experts — they want to know when to rely, when to question, and when to intervene. Good design makes those boundaries visible without demanding constant vigilance.

System Responsibility: Creating Trustable Environments

Even technically sound, well-designed systems remain fragile when the surrounding environment is untrustworthy. System responsibility — taken on by the institutional, organisational, and regulatory structures governing AI — performs a vital psychological function. It absorbs uncertainty on behalf of the user. People are more willing to rely on AI when they believe someone is monitoring the system, errors will be addressed, harm will be acknowledged, and safeguards exist beyond their individual control. In this sense, trust is not merely interpersonal or human-machine — it is institutional.

5.3 Trust as a Dynamic Process

One of the most common mistakes in AI deployment is treating trust as something that can be achieved at launch. Trust is continuously renegotiated throughout adoption — reshaped as users gain experience, systems are updated, use-contexts shift, and errors occur. Calibration changes in response to all of these.

Trust is strengthened not by the absence of failure but by how failure is handled. Transparent acknowledgement, timely correction, and visible learning all contribute to trust recovery. Silence, defensiveness, or blame-shifting do the opposite. Designing for calibrated trust therefore means planning not only for success but for misunderstanding, misuse, edge cases, breakdowns, and the institutional response to them.

5.4 Why Calibrated Trust Enables Faster, Safer Adoption

Designing for calibrated trust may appear to slow adoption. In practice, the opposite is true. Systems designed for calibrated trust face less resistance, experience fewer catastrophic failures, integrate more smoothly into workflows, require fewer corrective interventions, and sustain confidence over time. When users understand a system’s boundaries, they are more willing to rely on it. When institutions demonstrate accountability, they reduce fear. When design preserves agency, adoption becomes a choice rather than a mandate.

Rapid adoption does not come from persuasion or pressure. It comes from confidence grounded in clarity.

A Calibrated Trust Checklist for Deployment Teams

Before deploying any AI system into a setting where human decisions are consequential, these questions should have clear, documented answers:

- Has the deployment been designed for failure and recovery, not only for successful use?

- Can users articulate what the system is not designed to do?

- Are uncertainty and limitations visible at the point of decision — not buried in documentation?

- Is there a clear override pathway that users feel psychologically safe using?

- Are monitoring, drift detection, and incident response operational — not aspirational?

- Do governance roles have named individual owners, not committees without accountability?

- Are fairness and privacy communicated in user-centred language, not legal jargon?
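Teams that want "clear, documented answers" to be checkable could record the checklist as data and block release while any item is unanswered or unowned. The following is a minimal sketch under that assumption; `ChecklistItem`, `unresolved`, and `ready_to_deploy` are hypothetical names, and requiring a named owner per item is an illustrative extension of the governance question above.

```python
from dataclasses import dataclass


@dataclass
class ChecklistItem:
    """One checklist question with its documented answer (hypothetical format)."""
    question: str
    answer: str = ""  # the documented answer, empty until written
    owner: str = ""   # a named individual, not a committee

    def is_documented(self) -> bool:
        # An item counts only when it has both an answer and an owner.
        return bool(self.answer.strip()) and bool(self.owner.strip())


def unresolved(items: list[ChecklistItem]) -> list[str]:
    """Return the questions that still lack an answer or an owner."""
    return [item.question for item in items if not item.is_documented()]


def ready_to_deploy(items: list[ChecklistItem]) -> bool:
    """Deployment is ready only when nothing is unresolved."""
    return not unresolved(items)


# Example with placeholder content.
items = [
    ChecklistItem(
        question="Is there a clear override pathway?",
        answer="Users can reject any recommendation in one step.",
        owner="Named clinical safety lead",
    ),
    ChecklistItem(question="Are monitoring and drift detection operational?"),
]
blocked_on = unresolved(items)
```

A gate like this only confirms that answers exist. Whether they are good answers still needs human review.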

Research evidence

Trustworthy AI requires alignment between technical robustness, ethical principles, and institutional accountability; none of the three is sufficient alone. The integration of all three is what distinguishes durable, scalable AI adoption from fragile, compliance-driven deployment.

Source: Papagiannidis et al. (2025). Responsible Artificial Intelligence Governance: A Review and Research Framework. ScienceDirect.

Calibrated trust is confidence with understanding — not faith, and not fear. The question is no longer whether we can build AI that performs. It is whether we can build systems that people can rely on for the right reasons — and institutions capable of sustaining that reliance when things go wrong.

Dr Payal Ghatnekar is Technologies Research Programme Manager at Torbay and South Devon NHS Trust, a Certified AI Ethicist, and a specialist in AI governance and behavioural science. She advises NHS England and UKRI on technology adoption and innovation evaluation.