Part 2: Why Humans Miscalibrate AI

The behavioural psychology behind misuse and disuse

Post 2 of 5 — Trust in AI Series

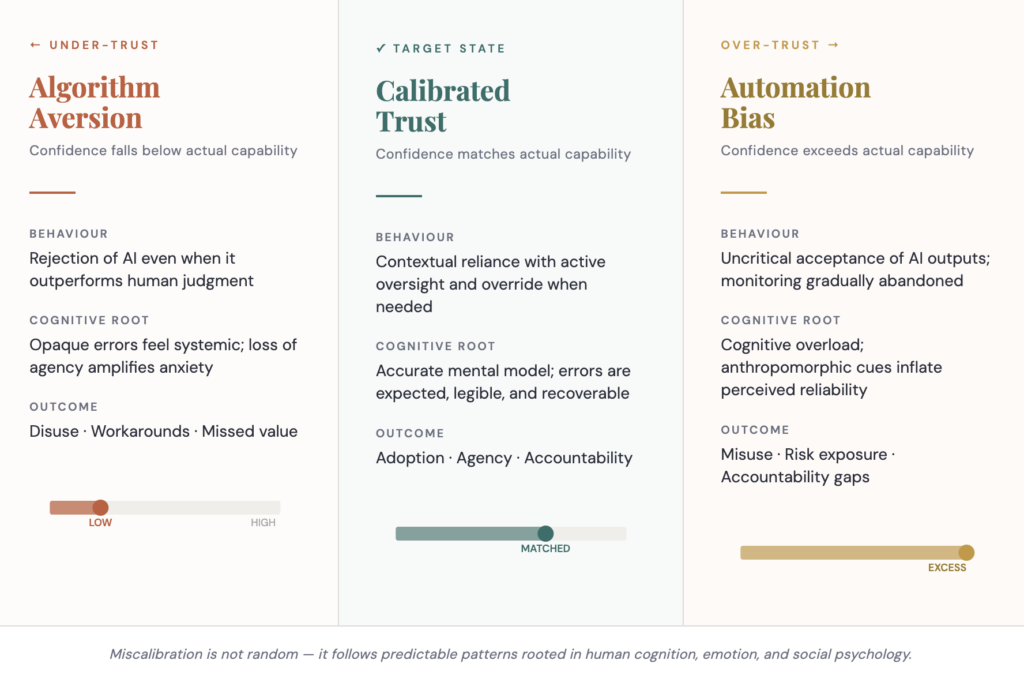

If Part 1 established what it means to trust AI, Part 2 addresses a harder question: why do people so consistently get that trust wrong? Miscalibrated trust is one of the most consequential challenges in AI adoption — and it is not random. It follows predictable behavioural patterns rooted in human cognition, emotion, and social psychology.

The problem is not that people make irrational decisions about AI. The problem is that they make human decisions about AI — and human decision-making is guided by biases, heuristics, and emotional responses that evolved long before machine intelligence existed.

2.1 The Two Failure Modes

Calibrated trust means the level of confidence placed in an AI system accurately reflects its true competence — its reliability, limitations, uncertainty, and contextual risks. In practice, two patterns consistently dominate instead.

Under-trust leads to disuse and algorithm aversion: people reject AI even when it performs demonstrably better than human judgment. Over-trust leads to misuse and automation bias: people accept AI outputs uncritically, even when contradictory evidence is available. Both undermine responsible adoption, from opposite directions, and both emerge from deeply human psychological tendencies rather than considered evaluation.
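One way to make the two failure modes concrete is to count them in a log of human decisions about AI suggestions. The sketch below is illustrative only: the `Decision` record, the `reliance_rates` function, and the sample log are hypothetical names and made-up data, and it assumes each suggestion can later be judged right or wrong. It treats misuse as accepting a wrong suggestion and disuse as rejecting a correct one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One logged interaction (hypothetical schema, for illustration only)."""
    ai_correct: bool     # was the AI suggestion right, judged afterwards?
    user_accepted: bool  # did the person follow the suggestion?


def reliance_rates(log):
    """Return (misuse_rate, disuse_rate) for a list of Decision records.

    misuse_rate: share of wrong AI suggestions that were accepted (over-trust).
    disuse_rate: share of correct AI suggestions that were rejected (under-trust).
    A rate is reported as 0.0 when there are no cases to measure it on.
    """
    wrong = [d for d in log if not d.ai_correct]
    right = [d for d in log if d.ai_correct]
    misuse = sum(d.user_accepted for d in wrong) / len(wrong) if wrong else 0.0
    disuse = sum(not d.user_accepted for d in right) / len(right) if right else 0.0
    return misuse, disuse


# Made-up sample data: four correct suggestions, two wrong ones.
log = [
    Decision(ai_correct=True, user_accepted=True),
    Decision(ai_correct=True, user_accepted=False),
    Decision(ai_correct=True, user_accepted=True),
    Decision(ai_correct=True, user_accepted=True),
    Decision(ai_correct=False, user_accepted=True),
    Decision(ai_correct=False, user_accepted=False),
]

misuse, disuse = reliance_rates(log)
print(f"misuse rate: {misuse:.2f}, disuse rate: {disuse:.2f}")
# prints "misuse rate: 0.50, disuse rate: 0.25"
```

Under these assumptions, calibrated trust would show up as both rates staying low at the same time. Tracking them separately matters because a single acceptance rate cannot distinguish a team that follows the AI too often from one that ignores it too often.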

2.2 Under-Trust: Algorithm Aversion

Algorithm aversion is well documented. When an AI model makes a small, visible mistake, people lose confidence in it far more rapidly than they would after the same mistake from a human expert. Human errors feel understandable and correctable. AI errors feel unpredictable, opaque, and systemic, as if they were evidence that the system itself may be flawed.

The cognitive roots run deeper than a single bad experience. When people cannot see the reasoning behind a decision, they fill the gap with anxiety — uncertainty interpreted as danger. When agency is removed, mistrust increases dramatically; even a small degree of override capability significantly improves adoption. People also consistently overvalue human judgment in domains that feel morally charged, believing that AI cannot grasp nuance, read situations, or apply ethical reasoning, regardless of whether that belief is technically justified.

2.3 Over-Trust: Automation Bias

At the other extreme, over-trust produces automation bias. This is the tendency to accept AI outputs uncritically, ignore contradictory evidence, and gradually stop monitoring a system that initially demanded attention. This happens because AI presents itself with confidence, reduces cognitive load, creates an illusion of objectivity, and offers fast answers in stressful contexts. In high-pressure environments such as clinical settings, over-reliance increases precisely because people cannot check everything manually. Cognitive overload is one of the strongest predictors of over-reliance.

Several design features unintentionally amplify this. Anthropomorphic signals (warm voices, conversational tone, human-like phrasing) increase perceived competence even when performance does not justify it. Consistency of output, even in a mediocre system, creates perceived reliability, which in turn creates trust. Clean, polished interfaces generate a halo effect in which accuracy is inferred from aesthetics. And social proof ("if everyone else uses it, it must be fine") normalises reliance before due diligence has been applied.

2.4 Three Root Mechanisms

Most trust errors trace back to one of three underlying patterns.

Cognitive shortcuts: human brains are not designed for statistical reasoning. They rely on fast intuition, pattern recognition, and emotional inference — while AI operates on probability, optimisation, and data distributions. The mismatch between how humans reason and how machines process creates persistent misalignment.

Emotional meaning-making: people judge AI not in technical terms but emotionally and morally, even when they believe otherwise. A system that feels cold or mechanical loses trust even when accurate. A system that feels warm and socially attuned gains trust even when it occasionally errs.

The need for control: behavioural science consistently shows that people trust systems which preserve autonomy, and distrust grows when agency is removed. Systems that allow overrides, offer explanations, support user judgment, and adapt to user preferences earn more trust independent of raw performance. Control is the psychological antidote to algorithm aversion.

2.5 Trust and Distrust Are Not Opposites

A key insight often overlooked in AI design is that trust and distrust are not mutually exclusive. Trust asks: will this help me? Distrust asks: will this harm me? People can hold both feelings simultaneously — believing AI is good at finding patterns while also fearing it will misjudge their specific case. This duality explains why AI adoption is inherently fragile. A system can be substantially trusted and simultaneously suspected, and that combination shapes behaviour in ways that neither attitude can predict alone.

Research evidence

Many participants trust AI more than humans because AI is perceived as impartial and free from self-interest. Yet in clinical contexts, 34% of radiologists overrode correct AI suggestions following a single visible error, potentially reducing the overall benefit of the technology. The gap between perceived objectivity and actual behaviour under uncertainty is where governance must operate.

Source: Gerlich (2024). Exploring Motivators for Trust in the Dichotomy of Human-AI Trust. MDPI Social Sciences, 13(5).

Miscalibrated trust is rarely a user problem. It is the predictable outcome of cognitive overload, uncertainty, and loss of control operating on psychology that evolved to evaluate people, not machines. It is not solved by better algorithms or larger datasets — it is solved by behavioural design, transparent communication, and governance that signals genuine accountability.
