Part 3: How AI Design Shapes Trust

Where psychology, interface design, and machine behaviour converge

Post 3 of 5 — Trust in AI Series

If trust in AI begins with psychology and miscalibration emerges from predictable cognitive patterns, Part 3 turns to where trust becomes tangible: design. Design is often treated as the aesthetic layer of AI systems — the colour palette, the interface, the conversational tone. In reality, it is one of the most powerful psychological levers for shaping trust. It is how systems communicate competence, signal intentions, and manage the user’s sense of control.

People do not interact with algorithms. They interact with representations of those algorithms — through interfaces, voices, dashboards, messages, and behaviours. Trust is therefore shaped not only by what a model does but by how it presents itself and how the user experiences that presentation. Design becomes the mediator between machine capability and human perception.

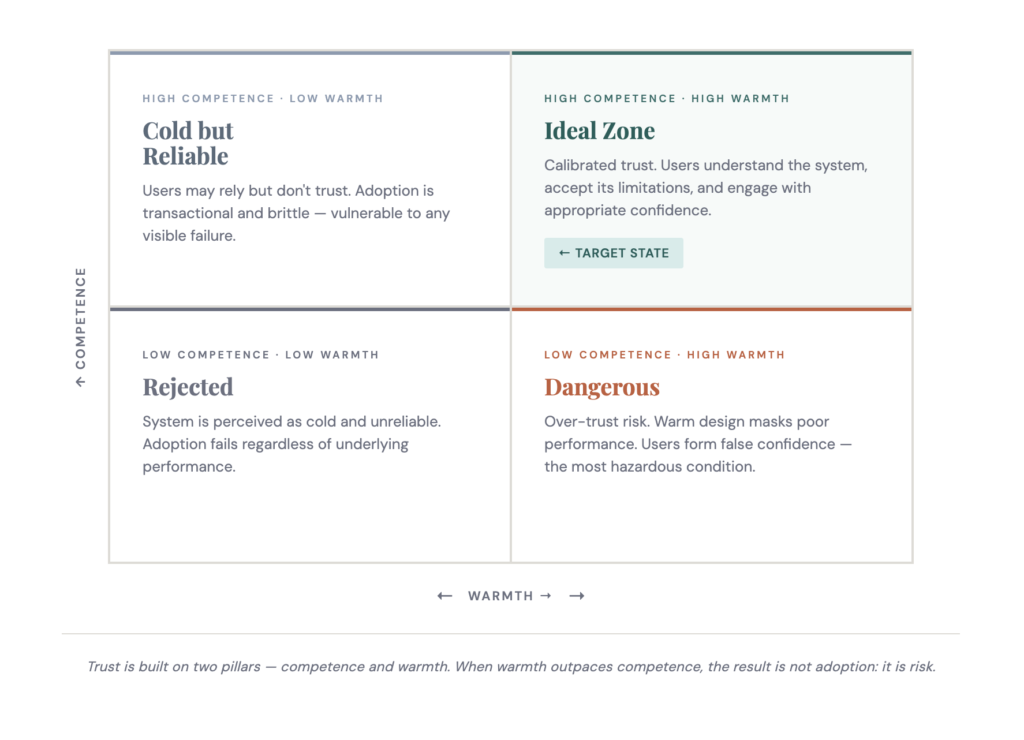

3.1 Two Dimensions of Perceived Trustworthiness

Social psychology has long shown that trustworthiness judgments are built on two pillars: competence (ability, expertise, reliability) and warmth (intentions, fairness, benevolence). These same dimensions apply when people evaluate AI systems, even though AI has no motives or emotions. The moment a system interacts with us, we instinctively assess whether it is capable, treating us fairly, and acting in our interests.

This is why trustworthy AI must be designed to signal both competence and warmth through its behaviour and communication — not through branding alone.

3.2 Competence: What Signals Ability

Users infer competence not through model architecture or accuracy metrics but through observable cues. Consistency and stability signal reliability: even small inconsistencies in phrasing, unexplained corrections, or changes in tone can undermine confidence. How a system handles mistakes matters more than whether it makes them — unexplained errors read as loss of control, while contextualised errors are absorbed as part of expected uncertainty.

Counterintuitively, systems that openly state their limitations — ‘I may be wrong about this,’ ‘confidence is low,’ ‘this is outside my training data’ — are trusted more, not less. Communicating uncertainty demonstrates honesty, calibration, and awareness. Competence is not about perfection. It is about clarity, consistency, and comprehensible reasoning.

3.3 Warmth: What Signals Intention

Even though AI does not possess intentions, humans infer warmth through design. Users want to know who benefits from the system, whether they might be disadvantaged, and whether it treats them equitably. When fairness is hidden, people assume unfairness. When fairness is explained, trust grows.

Privacy cues operate as moral signals — clear data boundaries, consent processes, explanations for data use, and user control over visibility all shape whether users believe the system is acting in their interest. Tone and language function similarly: a system that explains decisions respectfully, acknowledges user concerns, and uses human-centred language is perceived as more trustworthy than one that feels abrupt or imposed. Warmth does not substitute for competence, but it shapes whether people are willing to accept competence when it is demonstrated.

3.4 Anthropomorphism: Powerful, Predictable, and Risky

Anthropomorphism, the attribution of human-like characteristics to non-human agents, is one of the strongest trust drivers in AI design. People respond positively to natural voices, conversational phrasing, social timing, and polite turn-taking. These signals reduce uncertainty and create familiarity, lowering cognitive load and making interactions feel easier and more predictable. Familiarity and predictability of this kind are prerequisites for trust.

But anthropomorphism is a double-edged instrument. Human-like cues increase adoption speed and engagement, but they can simultaneously cause users to overestimate capability, assume moral intuition, forgive mistakes too readily, and stop monitoring the system. Anthropomorphism should therefore be used deliberately, not liberally: its purpose is to aid calibration, not to inflate trust.

3.5 Explainability: Relevance Over Completeness

Explainable AI is often introduced as a technical intervention, but its real impact is psychological. Users want to understand why a system made a recommendation, what factors influenced it, how confident it is, and whether alternatives exist. Explainability succeeds when it reduces cognitive uncertainty — not when it attempts to make users understand model architecture.

The persistent misconception is that more transparency produces more trust. Full transparency is overwhelming and often counterproductive, so it is rarely what people want. They want relevant clarity: simple, contextual, local explanations that are honest about uncertainty and actionable in the moment. The goal of explainability is cognitive alignment: helping users form an accurate mental model of how the system behaves so they can anticipate its strengths, recognise its limitations, and intervene when needed.

Research evidence

Social and conversational cues significantly increase perceived trustworthiness, even when technical understanding is low. Notably, users were willing to share more sensitive information with neutral, machine-like systems, even though they reported trusting those systems less. This highlights a critical distinction: felt trust and responsible use are not the same thing. Emotional comfort generated by warm design does not reliably translate into safer or more thoughtful behaviour.

Gerlich (2024). Exploring Motivators for Trust in the Dichotomy of Human-AI Trust. MDPI Social Sciences, 13(5).

People do not trust algorithms; they trust the signals a system sends through behaviour, design, and communication. When design overpromises capability, trust becomes inflated and safety is compromised. When design under-communicates value, trust stays low and adoption stalls. The designer’s responsibility is to close the gap between signal and capability, ensuring that what the system signals matches what it can actually do.

Continue the series

Prefer to read the whole series in one go? Download the full white paper — formatted for sharing with teams and policy contacts.
